Mahesh Nirmalan MD, FRCA, PhD, FFICM and Roshan Ragel PhD

Professor Mahesh Nirmalan is Associate Vice President for Responsible Research Practice at the University of Manchester, UK, and Professor Roshan Ragel is Professor of Computer Engineering at the University of Peradeniya, Sri Lanka.

How we choose to conceptualise Artificial Intelligence (AI) is one of the cornerstones of the current debate on the ethics of AI. Do we see AI as a tool developed by humans to meet human needs, an instrument like a hammer or a washing machine? Or do we see AI as a post-human entity for which the notion of independent moral agency is relevant? The former position, which treats AI as merely an advanced tool, is commonly referred to as ‘Instrumentalism’. The latter, which treats AI as an independent agent that can make its own moral judgements, and that can therefore do wrong (a moral agent) or be wronged (a moral patient), is referred to as ‘Relationalism’. Current discourse on the ethics of AI sits on a spectrum between these two primary frameworks: “Instrumentalism vs Relationalism”. Where AI is positioned along this spectrum is not merely a philosophical question, as it directly shapes how responsibility, liability, and governance should be assigned when AI systems are deployed.

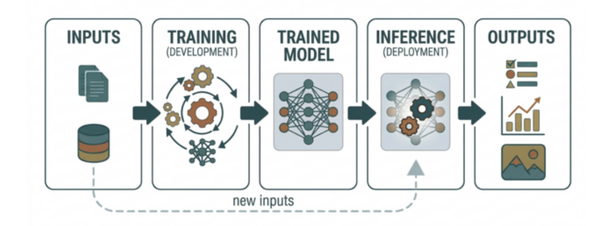

Fundamentally, AI is about creating machines, mostly computers and computer algorithms, that are capable of intelligent behaviour. This raises the question of how we define ‘intelligence’. At its core, intelligence, or intelligent behaviour, is the ability to perceive information from external sources, process it, and produce appropriate responses (or outputs) that are consistent with the processed information. Defined in this manner, intelligence becomes a generic attribute, not one bound to human values. Even simpler forms of life, from single-cell organisms such as an amoeba to multicellular organisms such as worms, display intelligent behaviour in this sense: they perceive information, process it (in the case of worms, within a rudimentary nervous system) and produce appropriate responses. Machines or robotic systems created by humans and equipped with similar abilities are said to possess artificial intelligence. Importantly, however, despite possessing such ‘functional intelligence’, AI systems clearly lack consciousness, free will, emotions, intent and moral awareness, and this distinction matters when considering the position of AI in modern society.

Human relationships with AI are, however, undergoing a rapid transition, with many users voluntarily granting AI systems a higher status than any other machine we have encountered in human history. In interactions with tools such as ChatGPT, DeepSeek, Microsoft Copilot or Google Gemini, it is not uncommon to see polite expressions such as ‘please’ and ‘thank you’, which have thus far been reserved for communication with other life forms. Whether these changes reflect an effort by users to protect their own humanity or a genuine shift in how we perceive AI is hard to determine at this stage. But there is no doubt that the relationship between humans and AI is evolving. Part of this shift reflects a natural human tendency to anthropomorphise complex systems, attributing agency and personality to them even though they operate purely through statistical pattern recognition. These behaviours will no doubt continue to evolve as AI systems become more sophisticated and as ongoing attempts to create Artificial General Intelligence (AGI), capable of carrying out any cognitive task that humans can, reach further maturity.

Given the transitional stage we are currently in, how institutions, including schools and universities, should view the ethical principles that govern the use of AI in education and research needs further consideration. If we view AI through the lens of “Instrumentalism”, this question becomes relatively easy to navigate. If AI is simply another instrument in our armamentarium, we would be justified, from an ethical perspective, in treating it like the many other instruments we deploy in our daily lives, no differently from, for example, a hammer, the internet or a mass spectrometer. The use of these instruments is left to the user's discretion, with the user taking full responsibility for ensuring that their use complies with the major pillars of ethics. From this perspective, responsibility for ethical outcomes remains firmly with the human actors: the users who deploy the systems, the institutions that govern their use, and the organisations that design and distribute the technology. In other words, users are expected to ensure that any output generated by these instruments respects the autonomy, best interests (beneficence) and freedom from harm (non-maleficence) of, and ensures justice for, all subjects who could be affected by it. Structural or systematic breaches of these fundamental conditions arising from design flaws would fall within the remit of the manufacturers. One could even take the view that, within an ‘Instrumentalist’ framework, no fundamentally new ethical principles are needed to govern AI applications in teaching, learning or research.

The ability to hold organisations accountable for their actions is a crucial part of governance. The ‘Instrumentalist’ approach also ensures that the tech companies developing and validating AI applications are held fully accountable for the validity and safety of their products. In medical applications, for example, just as the manufacturers of medicines and medical equipment can be held liable for systematic harm arising from faulty design, tech companies can be held accountable for harm that patients suffer because inappropriate datasets were used to develop and validate AI algorithms. Manufacturers will therefore need to ensure that the datasets they use are created and validated with due regard to demographic diversity, age and gender distributions, and genetic variation in society.

It is, however, true that how advanced AI systems integrate datasets, and the diverse methods they use to analyse them, are not always obvious, even to the most diligent system designers. AI can, and certainly will, design improved versions of itself, which in turn will design even smarter versions, repeatedly and iteratively, resulting in an “intelligence explosion” through recursive self-improvement. It is also possible that advanced systems could scan, model and reproduce human brains and then connect them to robotic bodies. Current AI technology is virtually knocking at the door of such possibilities, and how such systems will operate is impossible for any system designer to predict. Many present-day AI systems already display a degree of opacity and unpredictability that raises important questions about transparency and, therefore, accountability. This has led authors such as Yuval Noah Harari to describe a world in which humans no longer dominate but instead share the world with AI, trusting algorithms to make the correct decisions on their behalf. Given this direction of travel, is it possible, or even necessary, for us to be willing to grant AI systems independence and moral or ethical agency? There is no doubt that some of the decisions delegated to these advanced systems will have moral consequences. For example, self-driving vehicles or autonomous weapons operated by AI will be called upon to make decisions with tremendous moral consequences, including the potential loss of life. In such situations, the central ethical question is not whether the machine can technically make a decision, but who should bear responsibility for its consequences. Is it acceptable, then, to assign moral responsibility for such decisions to an autonomous AI rather than to the organisations and people responsible for its initial design, construction and deployment?

But how can a system that is not conscious, and that therefore by definition does not understand the implications of what it does but merely executes what it is programmed to do, take responsibility for its actions? This is clearly a treacherous ethical minefield, and at present there does not seem to be a clear consensus amongst ethicists or policymakers. Whilst the industry surges forward in developing the technology, ethical and governance frameworks lag behind, trapped within tentative philosophical discussions and idealistic grandstanding.

Equity and fairness are important facets of the ethical argument for or against any technological advancement. It is therefore necessary to consider how a world run by advanced AI systems could affect existing social inequalities. The ruthless pursuit of stable supply chains at the lowest possible cost by developed economies, and the expressed desire for social dominance among some sections of society, make these considerations urgent and fundamental. Could the pursuit of these warped objectives reach unprecedented levels of efficiency in a world run by AI? The recent statement by a senior official of the US State Department, “US won’t repeat China Trade mistake with India” (reported on 6th March 2026), and the strategy of bombing sovereign states into submission underscore the prevailing mindset among some powerful players shaping international relations.

In the development of science and the philosophy of science, the dominant approach in the Global South (with some notable exceptions) has been one of reproduction and passive consumption. In other words, ideas and products created in advanced Western economies have been cloned, modified, reproduced and freely consumed by countries in the Global South. Despite frequent rhetorical references in popular media, podcasts and blogs to a bygone era of wisdom driven by intellectual originality and a culture of ‘seeking’, consumption rather than creation has defined the role of the Global South. The underlying reasons are understandably complex and beyond the scope of this article. However, for countries like Sri Lanka, almost 80 years after the end of the colonial period, there is a need to re-evaluate this dependency. The development of AI technology in our asymmetric world lends urgency to the required paradigm shift. Addressing this challenge will require deliberate investment in local data ecosystems, interdisciplinary research capacity, and regional collaboration. There is therefore an unprecedented need for countries in the Global South to prioritise a collective regional approach, pooling resources and sharing datasets so that they can contribute meaningfully to shaping the debate. Despite some early initiatives and encouraging preliminary steps, tangible action on the ground has so far been scarce, with most initiatives confined to conferences and boardrooms.

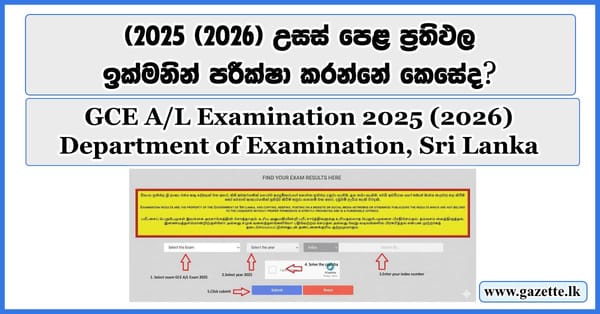

Unless local knowledge, literature and experiences are captured and presented in a suitable format, there is a risk that AI outputs will be biased and will promote distorted social theories. The ‘Noolaham’ project, which operates in Jaffna, is funded by members of the diaspora community and aims to digitise local Tamil literature, is an outstanding example of efforts to address this risk. To date, over 120,000 printed documents and over 35,000 multimedia works have been digitised through this project and made available in the ‘Noolaham’ online library. At a national level, some countries, including Sri Lanka, have begun developing AI strategies and governance frameworks to ensure that technological developments align with local imperatives. Sri Lanka has recently adopted a national AI strategy with the ambition of positioning the country as a regional hub, emphasising collaboration among government, academia, and industry. In response, institutions such as the University of Peradeniya have adopted a proactive public engagement strategy targeting schools. Overcoming the narrow sectarian mindset that divides these countries along linguistic, religious and tribal lines must become a priority if these promising initiatives are to deliver the expected outcomes. Such a collective approach is clearly a major political priority across the entire region if these communities are to avoid a second wave of subjugation in a world run by AI. Establishing publicly funded regional AI governance platforms or hubs in South Asia, Africa, and South America could be the first step towards the Global South meaningfully and independently shaping the AI revolution.

The ideas expressed in this article are the personal opinions of the authors and do not represent the views of the Universities of Manchester or Peradeniya on the subject.