By Mahesh Nirmalan, MBBS, MD, FRCA, PhD, FFICM & Roshan G. Ragel, PhD

Mahesh Nirmalan is Associate Vice President for Responsible Research Practice at the University of Manchester, UK. Roshan G. Ragel is Professor of Computer Engineering at the University of Peradeniya, Sri Lanka.

It is widely recognised that the impact of Artificial Intelligence (AI) on human societies will be profound and is likely to reach all facets of human life. It has been described as the point of “technological singularity”: a moment in human history when exponential technological progress leads to the ending of human affairs as we understand them today. Recognising the huge opportunities and risks of AI systems, UNESCO in 2021 published a series of recommendations aimed at the ethical use of AI. The core values underpinning these recommendations are that AI systems should:

Respect, protect and promote human rights, fundamental freedoms and human dignity.

Ensure that the environment and ecosystems flourish.

Ensure diversity and inclusiveness in human societies.

Ensure that humanity can exist and thrive in peaceful, just interconnected societies.

UNESCO’s recommendation provides the current and most widely accepted ethical framework for AI. Even in 2025-2026, global AI governance discussions, including those at UN summits and UNESCO’s global fora, point back to the 2021 recommendations as the de facto ethical baseline.

While ambitious, the UNESCO recommendations are non-binding, leaving implementation to national governments, regulators, and industries. The gap between aspiration and practice becomes clear when ethics confront real-world pressures such as market competition, geopolitics, and technological acceleration. There can be no doubt that the core values outlined in the UNESCO document should underpin all our actions, whether as individuals, societies, governments, or larger inter-governmental agencies. However, to what extent these noble principles can be applied to the modern world, with rising populations and diminishing resources, remains unknown. UNESCO’s thinking in this area does not reflect the fundamentals of the ‘human condition’, characterised by self-preservation, self-promotion, self-advancement, and survival of the fittest. In a market economy that prioritises productivity, profits and shareholder dividends, the extent to which the lofty values outlined by UNESCO can regulate the development and application of AI systems remains an open question. The way the world has moved on since the publication of the guidelines in 2021, with powerful countries asserting their right to superiority and an ethos of ‘might is right’, does not give room for much optimism. The wars in Eastern Europe and the Middle East and the enforced regime change in South America emphasise the new rules of engagement. The demands made by governments to private AI technology companies for unfettered access to AI systems for military use, including for triggering offensive action without human intervention (reported by BBC News on 25/02/26), take these concerns to new heights, and in this context, the recommendations made by UNESCO stand out for their naive optimism.

UNESCO, quite correctly, emphasises the need for human oversight in mitigating the risks inherent in AI technologies. Human oversight in this context is not limited to individual human oversight but extends to more inclusive public scrutiny where appropriate. Paragraph 36 states: “It may be the case that sometimes humans would choose to rely on AI systems for reasons of efficacy … but an AI system can never replace ultimate human responsibility and accountability. As a rule, life and death decisions should not be ceded to AI systems.”

But the expression “life and death situation” is subjective and lacks practical value. A life and death situation for a rural farmer in Asia is very different from that of a technocrat based in Silicon Valley, and in this context, who is to determine what is a “life and death situation”? What is undeniable, however, is that the AI revolution is about increasing the efficiency and productivity of systems across all sectors by augmenting human labour and human thought with robots and algorithms. The imperatives of the executives who design these systems, usually to maximise profits and shareholder dividends by reducing labour costs, do not always align with the imperatives of the large segments of society dependent on wages for their labour. In fact, common sense dictates that it is difficult (if not impossible) to reconcile these two sets of imperatives without a fundamental shift in societal mindset.

The human oversight of AI systems alluded to in the above paragraph also raises several other important questions. The cognitive disengagement that would follow the widespread adoption of AI tools in education and training will have secondary consequences for the future workforce’s ability to independently curate the outputs of AI systems. Examples of cognitive disengagement may be found in the overwhelming dependence on technology to solve even the simplest tasks in mental arithmetic needed to navigate transactions in a corner shop. Given this tendency for cognitive disengagement, what are the realistic possibilities that humans will be able to curate complex AI systems using their own skills to obtain, synthesise and critically evaluate new information? It may be argued that by integrating AI into education, it will be possible to create a new workforce cohort experienced in algorithmic thinking. By learning to interrogate AI outputs through systematic questioning, this future workforce may develop a more sophisticated ability to spot biases and inaccuracies that older generations might miss. This, however, remains an unproven conjecture in the wake of evidence exemplified by the impact of calculators on mental arithmetic. Even if this were proven true, it is feared that the new roles created in modulating and curating AI will hardly be adequate to replace the large numbers of jobs and positions that AI may displace.

Several solutions have been proposed for addressing the potential for mass unemployment that may follow widespread adoption of AI in industry. Among those being actively debated are Universal Basic Income (UBI), where a recurrent payment is made to all citizens irrespective of employment status; Universal Basic Services (UBS), where the state ensures essential services (such as health care, transport, internet and housing) irrespective of employment status; and Negative Income Tax, where those earning below a certain income threshold would receive state supplements. However, an essential component of human wellbeing is the need for ‘purpose’ in life and the satisfaction derived through fulfilling such purposes. This need, built into the very fabric of humanity, cannot be replaced by ensuring UBI, UBS, or negative taxation alone. International standards derived from the Human Rights Charter define the “Right to Health” as the right to achieve the highest attainable level of physical, mental and social well-being. ‘Purpose’ and ‘meaning’ are undeniably important facets of mental and social well-being and have to be important components of any debate on the ethics of AI. The debate cannot, therefore, be dominated by monetary considerations alone.

The UNESCO guidelines go on to say, “none of the processes related to the AI system life cycle shall exceed what is necessary to achieve legitimate aims or objectives”. They do not, however, say who defines what counts as a ‘legitimate aim’ in any given political, social or industrial context. Can the need of a powerful nation to secure a stable supply chain for raw materials or rare earth minerals at the lowest possible cost, irrespective of the needs and sensitivities of the countries where such resources are to be obtained, be considered a legitimate aim? Furthermore, the guidelines state, “AI actors should promote social justice and safeguard fairness and non-discrimination of any kind in compliance with international law. This implies an inclusive approach to ensuring that the benefits of AI technologies are available and accessible to all, taking into consideration the specific needs of different age groups, cultural systems, different language groups, persons with disabilities, girls and women, and disadvantaged, marginalized, and vulnerable people or people in vulnerable situations”. This is clearly a highly laudable recommendation. However, the number of times international law has been violated by global players, in both the global south and the global north, makes one question what action, if any, can be taken when international laws are violated in pursuit of commercial and geopolitical interests. What guarantees do we have that the global agencies, which have largely been powerless in preventing wars and overt violations of human rights in many countries, will ever be in a position to legislate against or prevent exploitative practices in the development and deployment of AI?

The UNESCO guidelines emphasise the need for human oversight in instances where decisions are understood to have an impact that is irreversible or difficult to reverse. The challenge, however, is that virtually every AI application has the potential to cause irreversible changes in how human societies function. Human societies are highly interdependent, with nonlinear interactions among their components. It is well known that in such self-organising systems, even the smallest perturbation caused by an external factor can significantly alter the trajectory of the entire system, a phenomenon known as the butterfly effect. Given this basic fact, how the widespread adoption of AI systems will alter the socio-cultural, political and economic balance is almost impossible for any human system to evaluate within the relatively short time span available. Attempts to regulate will invariably be seen as impeding development and scientific progress by institutions and even governments concerned about losing a slice of the AI pie.

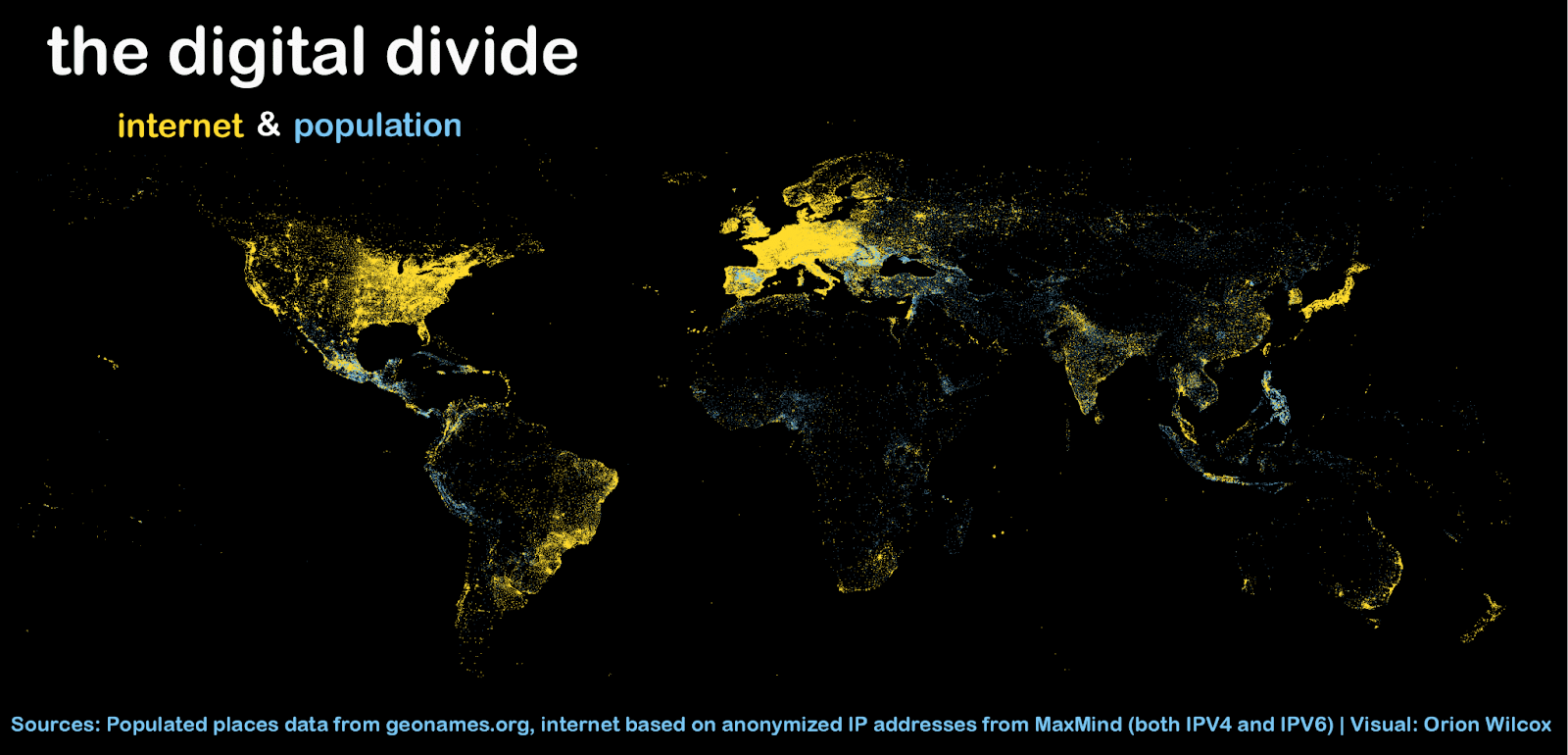

UNESCO also emphasises the need for fairness and inclusivity and states, “Member States should work to promote inclusive access for all, including local communities, to AI systems with locally relevant content and services, and with respect for multilingualism and cultural diversity”.

AI systems are only as good as the data sets used to develop, validate and calibrate the relevant algorithms. It is, however, well known that in large parts of the world, mainly across the global south, the current systems to capture locally relevant data are extremely weak or even absent. In the health sector, even in lower-middle-income countries such as India and Sri Lanka, record keeping is poor outside some elite corporate or semi-government institutions. The situation in poorer countries in sub-Saharan Africa is even more patchy. These countries and communities are several years, if not decades, behind the growth curve in creating high-fidelity data sets that can feed into developing locally relevant AI systems. There is no doubt that countries such as India have, in recent times, made significant progress in this respect. Electronic Health Records (EHR) in India have rapidly evolved through the Ayushman Bharat Digital Mission (ABDM), launched in 2021 to create a unified, interoperable digital health ecosystem. Key components include the Ayushman Bharat Health Account (ABHA) card for unique identification and the adoption of national EHR standards, such as SNOMED CT, to ensure a standardised, accessible, and secure digital medical history. But such systems remain a pipe dream for many other countries in South Asia, Africa and Latin America, as the respective governments lack the vision, courage and resources to move in this direction.

It is clear that the AI revolution is not a distant prospect, and the days of horizon scanning are over. Whilst the power of AI and the potential new opportunities it could create are exciting, the very real challenges in the potential destabilisation of some societies cannot be ignored. The pace of growth and the need for large (and small) players to outstrip their competitors for a slice of the AI pie, aided and abetted by governments openly calling for the return to a “golden era” of dominance, imply that a future driven by AI can have an extremely destabilising impact on societies and countries that fall outside an elite grouping. In particular, algorithmic bias (inadvertent or otherwise) can produce outputs that systematically undermine societies through distorted social theories based on self-perpetuating “automated truths”. If poorer countries are to survive this challenge, strong action is needed at national and regional levels so that resources can be pooled to tackle these emerging challenges. Overcoming internal differences through an inclusive political narrative must be prioritised so that countries and regional groupings are more effective in producing locally relevant, high-fidelity data sets. The priority for organisations such as UNESCO is to develop practical and honest recommendations, taking into account the realities of power asymmetry within a market-driven economy. Idealistic grandstanding based on lofty human ideals is not useful in facing up to the very real challenges outlined above.

Note: The views expressed in this article are the personal views of the authors and do not necessarily reflect the views of the Universities of Manchester and Peradeniya on the subject.